A Patch-Based Voting Framework with MobileNetV2 for Identifying AI-Generated Visual Content in Digital Poster

Keywords:

MobileNetV2, Patch-Based Analysis, AI-Generated Content Detection, Human Involvement Classification, Deep Learning, Model Selection Strategy

Abstract

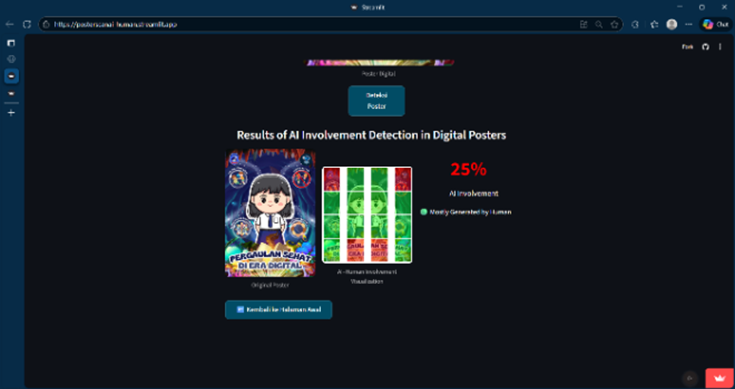

This study aims to detect AI involvement in the creation of digital posters. It uses the MobileNetV2 network architecture and compares several evaluation scenarios to determine the best model configuration for this task. The proposed method applies a patch-based voting mechanism to model local visual patterns that serve as evidence of the creator's involvement. The dataset comprises 208 posters, half human-made and half AI-generated. The work follows the CRISP-DM framework, which consists of six steps: (1) business understanding; (2) data understanding; (3) data preparation; (4) modeling; (5) evaluation; and (6) application (deployment). Several MobileNetV2 training configurations, varying the learning rate, the number of unfrozen layers, and the use of data augmentation, are explored to find the most reproducible configuration with the highest performance. The best model is selected based on its performance on validation data and on the uniformity of its votes across patches. The selected model achieved a training accuracy of 94.74%, a validation accuracy of 91.18%, and a test accuracy of 90.48%, showing a strong ability to distinguish the visual features of AI-generated work from those of human work. These results suggest that choosing an appropriate training scenario for MobileNetV2, combined with a patch-based approach, is an effective way to detect AI involvement in contemporary visual content.
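The patch-based voting idea described in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the abstract does not state the patch size, stride, decision threshold, or tie-breaking rule, so the values below are assumptions, and the function names (`extract_patches`, `vote`, `classify_poster`) are hypothetical. The `patch_classifier` argument stands in for the fine-tuned MobileNetV2 model; any callable that maps a batch of patches to per-patch probabilities of the AI class would fit. The agreement fraction is one possible reading of "voting uniformity across patches".

```python
import numpy as np


def extract_patches(image, patch_size=224, stride=224):
    """Cut an H x W x C image into square patches on a regular grid.

    patch_size and stride are assumed values, not taken from the paper.
    """
    h, w = image.shape[:2]
    if h < patch_size or w < patch_size:
        raise ValueError("image is smaller than one patch")
    patches = [
        image[top:top + patch_size, left:left + patch_size]
        for top in range(0, h - patch_size + 1, stride)
        for left in range(0, w - patch_size + 1, stride)
    ]
    return np.stack(patches)


def vote(patch_probs, threshold=0.5):
    """Majority vote over per-patch probabilities of the 'ai' class.

    Returns the winning label and the fraction of patches that agree
    with it. Ties go to 'human' (an arbitrary assumption).
    """
    patch_probs = np.asarray(patch_probs, dtype=float)
    total = patch_probs.size
    ai_votes = int((patch_probs >= threshold).sum())
    label = "ai" if ai_votes * 2 > total else "human"
    agreement = max(ai_votes, total - ai_votes) / total
    return label, agreement


def classify_poster(image, patch_classifier, patch_size=224, stride=224):
    """Classify a whole poster by voting over its patches.

    patch_classifier is a hypothetical callable: it takes an array of
    shape (n_patches, patch_size, patch_size, C) and returns n_patches
    probabilities of the 'ai' class.
    """
    patches = extract_patches(image, patch_size, stride)
    return vote(patch_classifier(patches))
```

With a 448 x 672 poster and the assumed 224-pixel patches, the grid yields six non-overlapping patches, and the poster-level label is whichever class most of them vote for. An agreement fraction close to 1.0 means the patches vote almost uniformly, which is the kind of signal the abstract describes using for model selection.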

License

Copyright (c) 2025 Cintha Hafrida Putri (Author)

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.